Powering the next generationof AI data centers.

High-frequency planar magnetics and solid-state transformer systems for the post-megawatt rack era.

AI is rewriting how data centers are powered.

A single AI training rack now draws over a megawatt — a level of power density that legacy AC distribution architectures were never designed to deliver. The conventional chain of utility transformer, UPS, PDU, and server PSU loses 12–15% of input power before it ever reaches the chip. At hyperscale, those losses translate into hundreds of megawatts of waste heat and stranded capacity.

The industry consensus is forming around a new architecture: medium-voltage AC delivered directly into the data hall, converted in a single stage to 800V DC, and distributed natively to GPU racks. NVIDIA Vera Rubin, hyperscaler reference designs from Google and Meta, and product roadmaps from Eaton, Vertiv, Delta, and SolarEdge all converge on this approach.

This is the largest architectural change in data center power distribution in three decades.

1.25 / 5 MW per rack. 800 V DC.The math leaves no choice.

At 48V — today's rack distribution standard — a 1.25 MW rack would draw 26,000 amps; a 5 MW rack, 104,000 amps. The copper required is physically incompatible with the rack envelope. The I²R losses turn the cabling into a heating element. This is not a cost problem. It is a problem of feasibility.

At 800V, the 1.25 MW rack draws 1,560 amps; the 5 MW rack, 6,250 amps. I²R losses drop by 256×. Conductor mass drops by ~45%. Twenty percent of the rack volume is returned to compute.

The post-megawatt rack and 800V DC are not two trends. They are one physical inevitability.

The grid runs on a different clock than AI compute.

A modern AI cluster is built and obsoleted on a 24-month cycle. A grid interconnection takes five to ten years. By the time a hyperscale gas plant clears regulatory approval, two generations of GPUs have been deployed and one has been retired. This is not an execution gap. It is a structural mismatch.

The only power architecture that operates on AI's clock is local: photovoltaic generation, on-site storage, medium-voltage DC distribution, and a single-stage SST as the central conversion node. Solar and storage follow Wright's Law — they get cheaper at the same rate as semiconductors. Compute and power, for the first time, share the same cost curve.

The next generation of AI infrastructure will not wait for the grid. It will build its own.

Three architectures.One that doesn't violate physics.

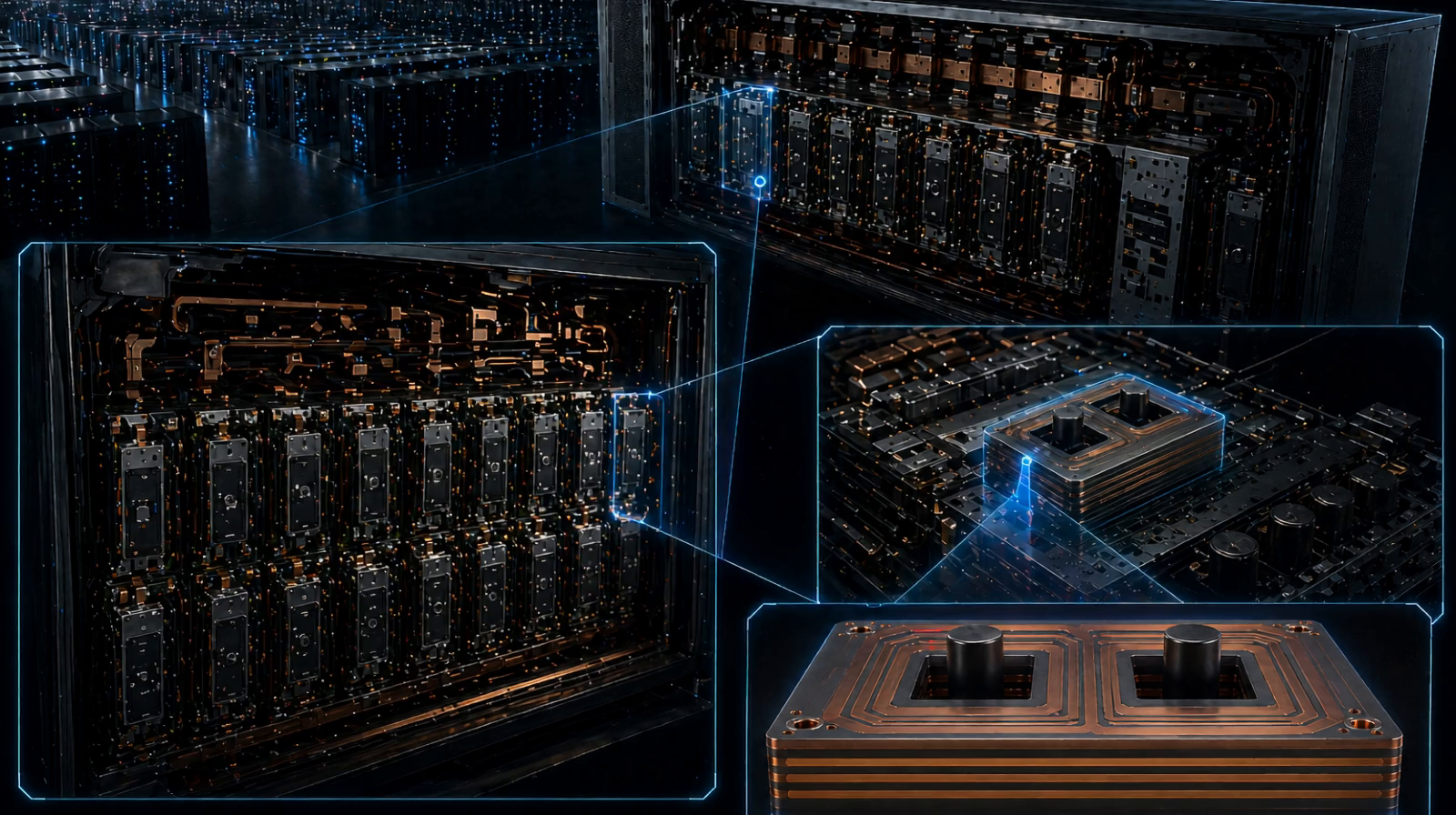

Centralized DC-link SST puts every disturbance into a single bulk capacitor — power density saturates and reliability degrades with scale. Parallel DAB modules avoid the central capacitor but trade it for a control coordination problem that compounds with every added port. Both architectures hit a wall before reaching multi-megawatt rack density.

Modular Magnetic Coupled Converter (MMCC) takes a different approach: low-frequency power exchange happens through a shared magnetic core, not through capacitors or control loops. Adding a port means adding a winding, not adding a system. Faults distribute through magnetic flux redistribution rather than propagating through a shared bus.

We did not choose MMCC because it is novel. We chose it because every other architecture violates a physical constraint at the scale AI compute now requires.

The bottleneck of SST is not the topology.It is the magnetics.

Every MMCC topology has been published in academic papers. Every major power electronics group from ABB to ETH Zurich to Tsinghua has demonstrated lab prototypes. None has put it into volume production. The wall is not in the circuit. It is in the medium-frequency transformer.

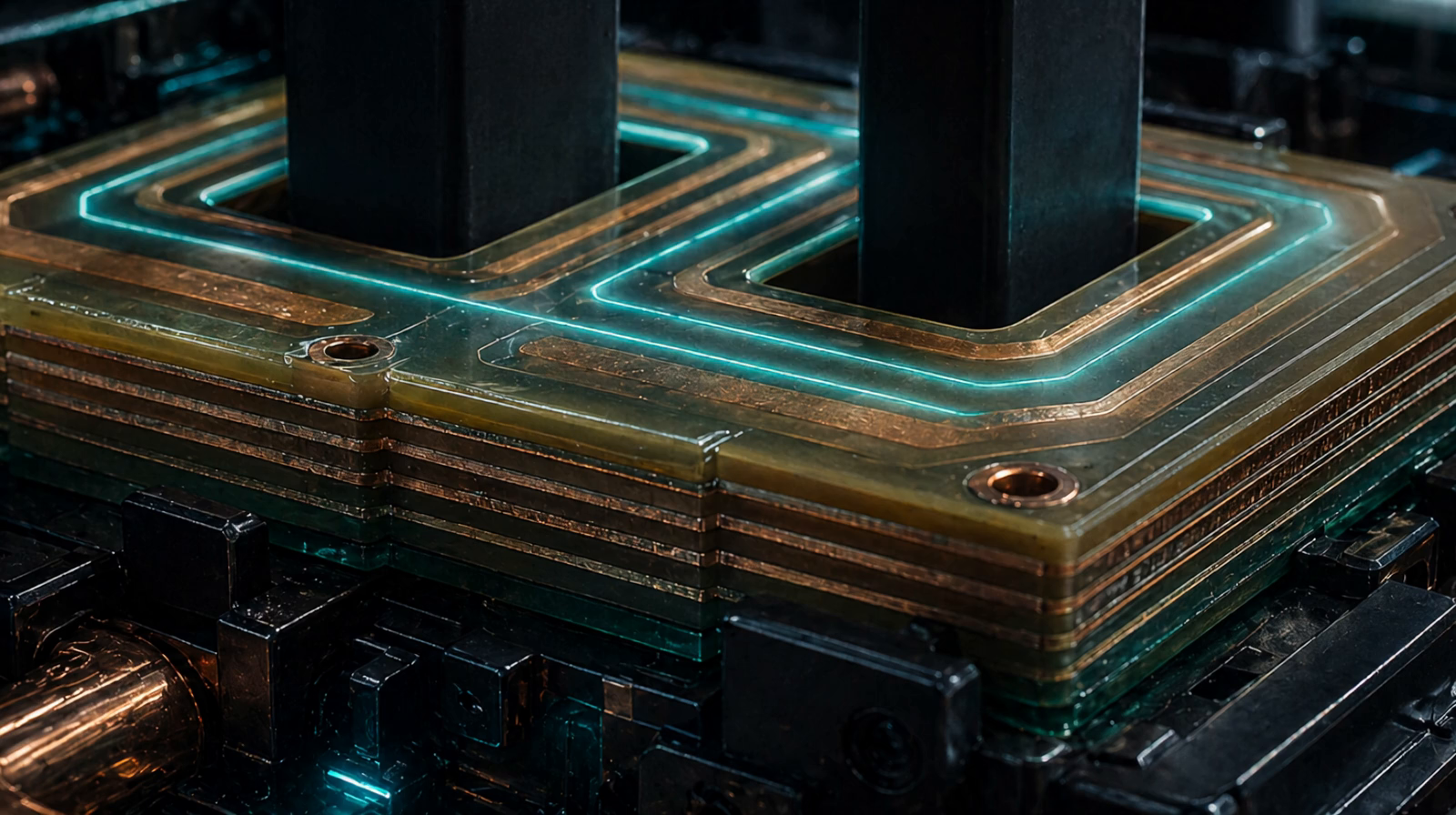

A 200kHz multi-port magnetic must hold multi-kilovolt insulation, sub-picocoulomb partial discharge, hundreds of amps of high-frequency current, and tight thermal envelopes — all in a manufacturable, repeatable, ratable form factor. Conventional epoxy-cast magnetics cannot operate at this frequency. Conventional PCB planar magnetics cannot operate at this power. The lab samples that exist were each hand-built by senior engineers; their parameters do not survive transfer to a production line.

This is the discipline that decides who ships an SST and who publishes one.

We started with the magnetics. The system followed.

SmartonEPower began with the single hardest component in any solid-state transformer: the high-frequency, medium-voltage power transformer. From that core, we extended into MMCC system topology, control architecture, and integrated SST modules — each layer engineered for the manufacturability constraints that have kept SST in the lab.

22 kW per module · 200 kHz · medium-voltage insulation

A planar magnetic platform engineered for power, frequency, and dielectric strength simultaneously — using polyimide-substrate windings, multilayer thick-copper construction, and proprietary insulation geometry. Designed for repeatability on a production line, not for a single lab demonstration.

Multi-port · magnetically coupled · modular SST

Energy exchange routed through a shared magnetic core rather than a central capacitor or bus. Modules are added by adding ports, not by adding systems. The architecture has been technically reviewed by leading hyperscale data center infrastructure teams.

Our methodology, in your browser.

How we engineer megawatt-class magnetics is the same way we engineer the small ones — by sweeping the design space at scale. We've opened the small-power planar magnetic optimizer at smartonep.com/ai so engineers can run the same Pareto-front loop we use internally.

Every design is generated from electrical, safety, and mechanical specs, then evaluated against a multi-objective fitness function — total loss vs. cost — across thousands of candidates.

No single "best" — the tool returns the trade-off frontier so you pick the operating point that fits your constraint, not ours.

The hosted tool covers planar transformers up to a few hundred watts. Higher-power, MV-isolated designs remain proprietary engineering.

What we build defines what the next data center can do.

We are hiring engineers who can buildwhat hasn't been built yet.

SmartonEPower is hiring across power electronics, magnetic design, high-voltage engineering, and systems integration. We look for engineers and researchers who have worked at the frontier of medium-voltage power conversion — at companies like ABB, Hitachi Energy, Tesla, Delta, and Sungrow, or in research programs at ETH Zurich, NCSU FREEDM, Tsinghua, and Virginia Tech CPES.

If you have built or want to build the hardest components in the next generation of energy infrastructure, we want to talk.